Artificial Intelligence (AI) has transformed numerous sectors, from healthcare to finance, and continues to integrate deeper into our daily lives. One of the most widely used algorithms in AI is the Decision Tree. This blog post looks at how Decision Trees work, their advantages and disadvantages, and their practical applications in AI.

What is a Decision Tree?

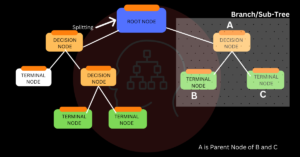

A decision tree is a structure that resembles a flowchart, where each internal node represents a test on an attribute, each branch represents an outcome of that test, and each leaf node holds a class label. The topmost node is the root node. The tree learns to partition the data according to attribute values, and it repeats this on each resulting subset, a process called recursive partitioning.

This flowchart-like structure helps you make decisions. It’s called a ‘Decision Tree’ because, similar to a tree in nature, it starts from the root, makes decisions at each internal node, and ends at the leaves.
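To make the root, internal node, and leaf vocabulary concrete, here is a minimal sketch of a hand-built tree and a function that walks it. The `Node` and `Leaf` classes, the `predict` helper, and the toy "play outside" data are all hypothetical names chosen for illustration, not part of any library.

```python
from dataclasses import dataclass


@dataclass
class Leaf:
    label: str  # class label returned when a sample reaches this leaf


@dataclass
class Node:
    attribute: str  # attribute tested at this internal node
    branches: dict  # maps each test outcome to a child Node or Leaf


def predict(tree, sample):
    """Walk from the root to a leaf, following the branch that matches the sample."""
    while isinstance(tree, Node):
        tree = tree.branches[sample[tree.attribute]]
    return tree.label


# Hypothetical toy tree: the root tests "outlook", one child tests "windy".
toy_tree = Node("outlook", {
    "sunny": Node("windy", {True: Leaf("stay in"), False: Leaf("play")}),
    "rainy": Leaf("stay in"),
})

print(predict(toy_tree, {"outlook": "sunny", "windy": False}))  # play
```

A real learner would build this structure from data instead of by hand, but prediction works the same way: one test per internal node until a leaf is reached.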

How Does a Decision Tree Work?

A tree’s accuracy depends heavily on where it places its splits. Classification and regression trees use different splitting criteria. Decision tree algorithms use a variety of measures to decide whether to split a node into two or more sub-nodes. Each split is meant to make the resulting sub-nodes more homogeneous; in other words, the purity of each node increases with respect to the target variable. The algorithm evaluates candidate splits on all available variables and chooses the split that produces the most homogeneous sub-nodes.
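As a rough illustration of "choose the split that produces the most homogeneous sub-nodes", the sketch below scores every candidate threshold on a single numeric feature by the weighted Gini impurity of the two sub-nodes and keeps the lowest. The function names and the tiny age/purchase dataset are assumptions made up for this example; real implementations repeat the search over every feature and recurse on each sub-node.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 for a pure node, larger for more mixed nodes."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_threshold(values, labels):
    """Return (threshold, weighted_gini) for the best split of the form value <= threshold."""
    best = (None, float("inf"))
    n = len(values)
    # Skip the largest value: splitting there would leave the right side empty.
    for threshold in sorted(set(values))[:-1]:
        left = [lab for v, lab in zip(values, labels) if v <= threshold]
        right = [lab for v, lab in zip(values, labels) if v > threshold]
        score = len(left) / n * gini(left) + len(right) / n * gini(right)
        if score < best[1]:
            best = (threshold, score)
    return best


# Hypothetical data: customer age and whether they bought a product.
ages = [22, 25, 30, 35, 40, 52]
bought = ["no", "no", "no", "yes", "yes", "yes"]

print(best_threshold(ages, bought))  # (30, 0.0)
```

Here the split `age <= 30` separates the two classes perfectly, so both sub-nodes are pure and the weighted impurity is zero.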

The choice of algorithm depends on the type of target variable. Let’s examine the most frequently used decision tree algorithms:

ID3 (Iterative Dichotomiser 3): It uses entropy and information gain as its splitting metrics.

C4.5: It is an extension of the ID3 algorithm that handles continuous attributes and missing values, and it prunes the tree to reduce overfitting.

CART (Classification and Regression Trees): It uses the Gini index as its splitting metric for classification and variance reduction for regression.

CHAID (Chi-square Automatic Interaction Detector): When building classification trees, it can split a node into more than two branches. It uses the Chi-square test to determine the best next split at each step.

MARS (Multivariate Adaptive Regression Splines): It is used for regression tasks. It builds a model in the form of an expansion of basis functions.
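To make the ID3 metrics from the list above concrete, here is a minimal sketch of entropy and information gain for categorical attributes. The helper names and the four-row weather table are hypothetical and exist only for this illustration; C4.5, CART, and CHAID swap in their own criteria at the same point in the algorithm.

```python
import math
from collections import Counter, defaultdict


def entropy(labels):
    """Shannon entropy in bits: 0 for a pure set, 1 for an even two-class mix."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(rows, attribute, target):
    """Parent entropy minus the weighted entropy of the groups formed by splitting on attribute."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[attribute]].append(row[target])
    parent = entropy([row[target] for row in rows])
    children = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
    return parent - children


# Hypothetical data: "outlook" fully determines "play", "windy" tells us nothing.
rows = [
    {"outlook": "sunny", "windy": False, "play": "yes"},
    {"outlook": "sunny", "windy": True, "play": "yes"},
    {"outlook": "rainy", "windy": False, "play": "no"},
    {"outlook": "rainy", "windy": True, "play": "no"},
]

for attr in ("outlook", "windy"):
    print(attr, information_gain(rows, attr, "play"))
```

On this table, splitting on `outlook` yields a gain of one full bit while `windy` yields none, so an ID3-style learner would test `outlook` at the root.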

Advantages of Decision Tree in Artificial Intelligence

Easy to Understand and Interpret: Decision Trees are simple to understand because they mirror the way people reason through a series of questions. They can also be visualized, which makes them easy to interpret.

Requires Little Data Preparation: Unlike many other algorithms, Decision Trees do not require data normalization. Some implementations can also handle missing values directly, although others need them to be filled in first.

Able to Handle Mixed Data Types and Multi-Output Problems: Decision Trees can handle both numerical and categorical variables, and they can also be applied to problems with more than one output.

Utilizes a White Box Model: Every prediction can be traced back through the tests that led to it, so the reason for a given outcome can be explained with simple boolean logic.

Performs Well with Large Datasets: Large amounts of data can be analyzed using standard computing resources in reasonable time.
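As a rough illustration of the white-box property listed above, the sketch below turns each root-to-leaf path of a tree into a readable IF ... THEN rule. The nested-dict layout, the `extract_rules` helper, and the loan-screening tree are hypothetical and used only to show the idea.

```python
def extract_rules(tree, conditions=()):
    """Return one readable IF ... THEN rule per leaf of a nested-dict tree."""
    if not isinstance(tree, dict):  # a leaf is stored as a plain class label
        clause = " AND ".join(conditions) or "TRUE"
        return [f"IF {clause} THEN {tree}"]
    rules = []
    for outcome, subtree in tree["branches"].items():
        test = f"{tree['attribute']} = {outcome}"
        rules.extend(extract_rules(subtree, conditions + (test,)))
    return rules


# Hypothetical loan-screening tree, for illustration only.
loan_tree = {
    "attribute": "has_income",
    "branches": {
        "yes": {
            "attribute": "has_defaults",
            "branches": {"yes": "reject", "no": "approve"},
        },
        "no": "reject",
    },
}

for rule in extract_rules(loan_tree):
    print(rule)
# IF has_income = yes AND has_defaults = yes THEN reject
# IF has_income = yes AND has_defaults = no THEN approve
# IF has_income = no THEN reject
```

Each rule is a conjunction of the tests along one path, which is why a tree's decisions are easy to audit.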

Disadvantages of Decision Trees

Overfitting: Decision Trees can create complex trees that do not generalize well from the training data to unseen data, a problem known as overfitting.

Unstable: Small variations in the data can result in a different decision tree. This can be mitigated by using decision trees within an ensemble.

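Cannot Guarantee a Globally Optimal Decision Tree: Practical algorithms build the tree greedily, choosing the locally best split at each node, so the result is not guaranteed to be the best possible tree. This can be mitigated by training multiple trees in an ensemble learner, where the features and samples are randomly sampled with replacement.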

Biased Trees: If some classes dominate, decision tree learners can create biased trees. It is therefore advisable to balance the dataset before fitting the decision tree.
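The ensemble idea mentioned above can be sketched in a few lines. The example below is a simplified form of bagging, assuming one numeric feature and one-split trees (stumps): each stump is trained on a bootstrap sample drawn with replacement, and the ensemble predicts by majority vote. All function names and the data are hypothetical, and unlike a random forest this sketch samples only rows, not features.

```python
import random
from collections import Counter


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows at random with replacement."""
    return [rng.choice(rows) for _ in rows]


def fit_stump(rows):
    """Fit a one-split tree on (value, label) rows by minimizing training errors."""
    best = None
    for threshold in sorted({v for v, _ in rows}):
        left = [lab for v, lab in rows if v <= threshold]
        right = [lab for v, lab in rows if v > threshold]
        left_label = Counter(left).most_common(1)[0][0]
        # If nothing falls to the right, reuse the left label for that side.
        right_label = Counter(right).most_common(1)[0][0] if right else left_label
        errors = sum(lab != left_label for lab in left)
        errors += sum(lab != right_label for lab in right)
        if best is None or errors < best[0]:
            best = (errors, threshold, left_label, right_label)
    _, threshold, left_label, right_label = best
    return lambda v: left_label if v <= threshold else right_label


def bagged_predict(stumps, value):
    """Majority vote over the predictions of all stumps."""
    votes = Counter(stump(value) for stump in stumps)
    return votes.most_common(1)[0][0]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
data = [(1, "low"), (2, "low"), (3, "low"), (4, "low"),
        (6, "high"), (7, "high"), (8, "high"), (9, "high")]
stumps = [fit_stump(bootstrap_sample(data, rng)) for _ in range(25)]

print(bagged_predict(stumps, 2), bagged_predict(stumps, 8))
```

Because each stump sees a slightly different sample, individual thresholds vary, but the vote averages out that variation, which is how ensembles reduce the instability of a single tree.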

Practical Applications of Decision Trees

Decision Trees have a myriad of practical applications in various fields, such as:

Business Management: To determine the approach most likely to succeed, decision trees are used in decision analysis.

Customer Relationship Management: Decision Trees can help in segmenting customers and predicting future prospects.

Fraudulent Statement Detection: Decision Trees are used for the detection of fraudulent financial statements.

Healthcare Management: Decision Trees are used to assist in the management of hospitals and healthcare.

Fault Diagnosis: Decision Trees are used in system fault diagnosis.

Conclusion

Decision trees are a supervised machine learning technique that repeatedly splits the data according to the value of a chosen attribute. They offer an effective and interpretable way of making decisions because they mirror the way people reason through a series of questions. While they have their disadvantages, such as a tendency to overfit and instability, these can be mitigated through techniques like ensemble learning. Decision Trees find applications in many domains and are a valuable part of a data scientist’s toolkit.

FAQs

1. What is a Decision Tree in Artificial Intelligence?

A decision tree is a flowchart-like structure for making decisions. Starting from a root node, it makes a decision at each internal node along the way and finishes at a leaf. Every internal node represents a test on an attribute, every branch represents an outcome of that test, and every leaf node holds a class label.

2. How does a Decision Tree work?

A Decision Tree works by splitting nodes into two or more sub-nodes based on certain criteria. The decision to split is based on different algorithms, with the aim of increasing the homogeneity or purity of the resultant sub-nodes with respect to the target variable.

3. What are the advantages of Decision Trees?

Some advantages of Decision Trees include their simplicity and ease of interpretation, their ability to handle both numerical and categorical variables, and their performance with large datasets. They also require little data preparation and can handle multi-output problems.

4. What are the disadvantages of Decision Trees?

Decision Trees can overfit, creating complex trees that do not generalize well from the training data to unseen data. They can also be unstable, with small variations in the data resulting in a different decision tree. Additionally, they are not guaranteed to return the globally optimal decision tree, and they can create biased trees if some classes dominate.

5. Where are Decision Trees used?

Decision Trees have a wide range of applications, including in business management for decision analysis, in customer relationship management for customer segmentation and prediction, in fraudulent statement detection, in healthcare management, and in system fault diagnosis.

6. What are some commonly used algorithms in Decision Trees?

Some commonly used algorithms in Decision Trees include ID3, C4.5, CART, CHAID, and MARS. These algorithms use different metrics and methods to decide how to split a node into sub-nodes.

7. How can the disadvantages of Decision Trees be mitigated?

The disadvantages of Decision Trees can be mitigated by using decision trees within an ensemble to stabilize the model, by training multiple trees where the features and samples are randomly sampled with replacement, and by balancing the dataset before fitting the decision tree.